Marginal Fisher Analysis (MFA) is a supervised linear dimension reduction method. The intrinsic graph characterizes the intraclass compactness and connects each data point with its neighboring pionts of the same class, while the penalty graph connects the marginal points and characterizes the interclass separability.

Arguments

- X

an \((n\times p)\) matrix or data frame whose rows are observations.

- label

a length-\(n\) vector of data class labels.

- ndim

an integer-valued target dimension.

- preprocess

an additional option for preprocessing the data. Default is "center". See also

aux.preprocessfor more details.- k1

the number of same-class neighboring points (homogeneous neighbors).

- k2

the number of different-class neighboring points (heterogeneous neighbors).

Value

a named list containing

- Y

an \((n\times ndim)\) matrix whose rows are embedded observations.

- trfinfo

a list containing information for out-of-sample prediction.

- projection

a \((p\times ndim)\) whose columns are basis for projection.

References

Yan S, Xu D, Zhang B, Zhang H, Yang Q, Lin S (2007). “Graph Embedding and Extensions: A General Framework for Dimensionality Reduction.” IEEE Transactions on Pattern Analysis and Machine Intelligence, 29(1), 40–51.

Examples

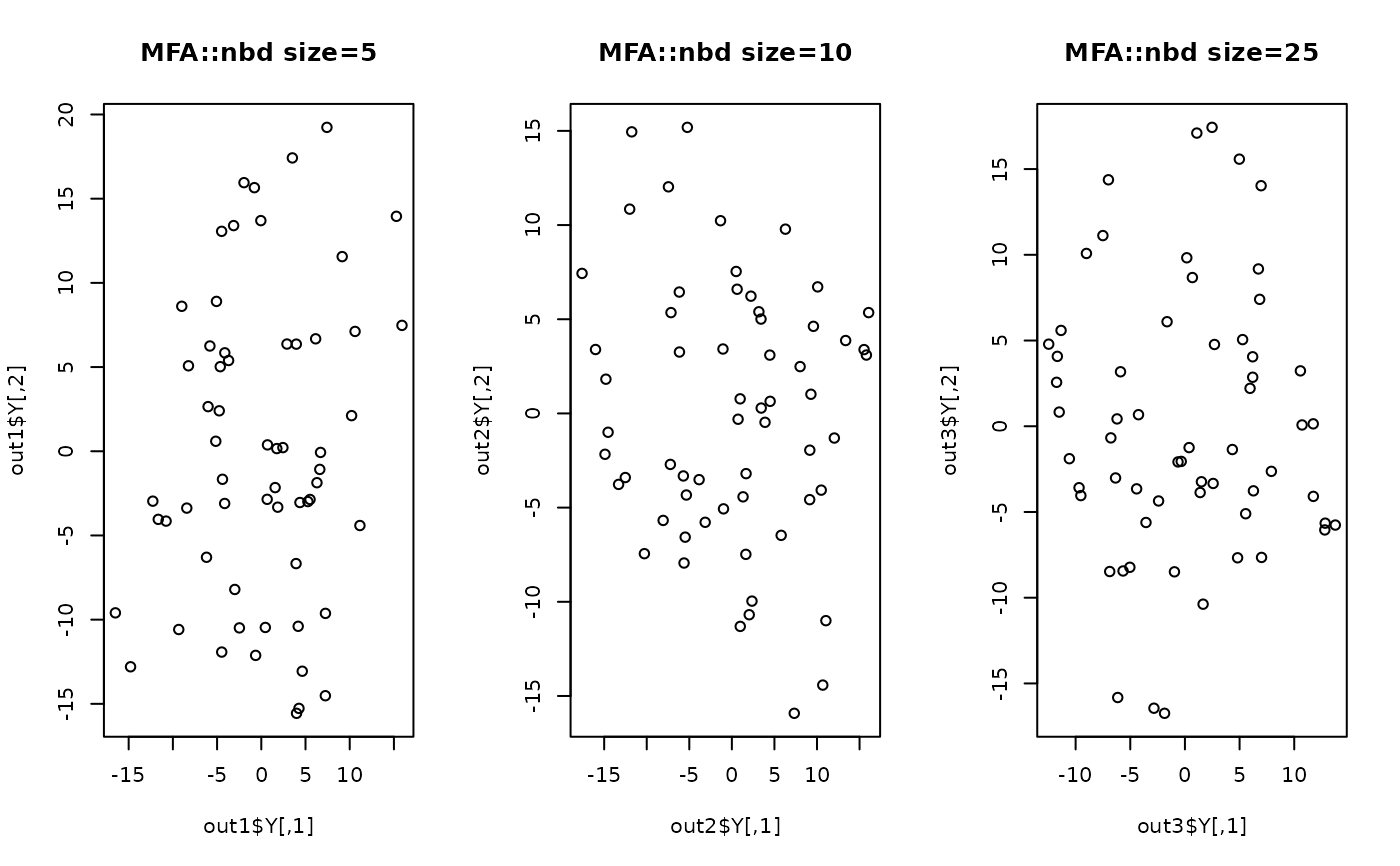

## generate data of 3 types with clear difference

dt1 = aux.gensamples(n=20)-100

dt2 = aux.gensamples(n=20)

dt3 = aux.gensamples(n=20)+100

## merge the data and create a label correspondingly

X = rbind(dt1,dt2,dt3)

label = rep(1:3, each=20)

## try different numbers for neighborhood size

out1 = do.mfa(X, label, k1=5, k2=5)

out2 = do.mfa(X, label, k1=10,k2=10)

out3 = do.mfa(X, label, k1=25,k2=25)

## visualize

opar <- par(no.readonly=TRUE)

par(mfrow=c(1,3))

plot(out1$Y, main="MFA::nbd size=5")

plot(out2$Y, main="MFA::nbd size=10")

plot(out3$Y, main="MFA::nbd size=25")

par(opar)

par(opar)