Locally Principal Component Analysis by Yang et al. (2006)

Source: R/linear_LPCA2006.R

Locally Principal Component Analysis (LPCA) is an unsupervised linear dimension reduction method. It focuses on the information carried by local neighborhood structure and seeks a linear projection reflecting that structure, which may help reveal discriminative information in the data.

Arguments

- X

an \((n\times p)\) matrix or data frame whose rows are observations and columns represent independent variables.

- ndim

an integer-valued target dimension.

- type

a vector specifying how the neighborhood graph is constructed. The following types are supported:

c("knn",k), c("enn",radius), and c("proportion",ratio). The default is c("proportion",0.1), which connects about 1/10 of the nearest data points among all data points. See also aux.graphnbd for more details.

- preprocess

an additional option for preprocessing the data. Default is "center". See also aux.preprocess for more details.
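To make the three `type` specifications concrete, here is a minimal base-R sketch of a neighborhood graph builder. The function `nbd_sketch` is hypothetical and is not part of the package. In particular, reading "proportion" as `k = round(ratio * n)` nearest neighbors is an assumption made for illustration; aux.graphnbd may differ in details such as symmetrization.

```r
# Hypothetical helper, NOT the package's aux.graphnbd: returns an n x n
# logical matrix where A[i, j] is TRUE if j is treated as a neighbor of i.
nbd_sketch <- function(X, type = c("proportion", 0.1)) {
  D <- as.matrix(dist(X))            # pairwise Euclidean distances
  n <- nrow(D)
  kind  <- type[1]
  value <- as.numeric(type[2])       # c("knn", 5) stores 5 as the string "5"
  stopifnot(kind %in% c("knn", "enn", "proportion"))
  if (kind == "enn") {
    A <- D <= value                  # neighbors lie within the given radius
  } else {
    # Assumption: "proportion" means the k = round(ratio * n) nearest points.
    k <- if (kind == "knn") value else max(1, round(value * n))
    A <- matrix(FALSE, n, n)
    for (i in seq_len(n)) {
      nn <- order(D[i, ])            # indices sorted from nearest to farthest
      nn <- nn[nn != i][seq_len(k)]  # drop the point itself, keep k nearest
      A[i, nn] <- TRUE
    }
  }
  diag(A) <- FALSE                   # no self-loops
  A
}

X      <- as.matrix(iris[, 1:4])
A_knn  <- nbd_sketch(X, c("knn", 5))
A_prop <- nbd_sketch(X, c("proportion", 0.1))
A_enn  <- nbd_sketch(X, c("enn", 0.5))
```

In this sketch the "knn" and "proportion" graphs are directed (row i lists the neighbors of point i), while the "enn" graph is symmetric by construction.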

Value

a named list containing

- Y

an \((n\times ndim)\) matrix whose rows are embedded observations.

- trfinfo

a list containing information for out-of-sample prediction.

- projection

a \((p\times ndim)\) matrix whose columns form a basis for projection.
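The role of a \((p\times ndim)\) projection matrix in out-of-sample prediction can be sketched in base R. The example below is illustrative only: it uses the first two principal axes from `prcomp()` as a stand-in for `projection`, and it assumes that "center" preprocessing means subtracting the training column means. The internal layout of `trfinfo` is not described here, so the sketch keeps the training mean in its own variable `mu`.

```r
data(iris)
set.seed(100)
subid  <- sample(1:150, 100)
Xtrain <- as.matrix(iris[subid, 1:4])    # 100 training observations
Xnew   <- as.matrix(iris[-subid, 1:4])   # 50 held-out observations

# Stand-in projection (assumption): first two principal axes from prcomp().
# With the package installed, the `projection` element of the returned list
# would play this role instead.
mu <- colMeans(Xtrain)
P  <- prcomp(Xtrain, center = TRUE, scale. = FALSE)$rotation[, 1:2]

# Center new rows with the TRAINING mean, then project onto the basis.
Ynew <- sweep(Xnew, 2, mu, "-") %*% P
dim(Ynew)
```

The new observations are centered with the training mean rather than their own, so that training and held-out embeddings share the same coordinate system.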

References

Yang J, Zhang D, Yang J (2006). “Locally Principal Component Learning for Face Representation and Recognition.” Neurocomputing, 69(13-15), 1697–1701.

Examples

# \donttest{

## use iris dataset

data(iris)

set.seed(100)

subid = sample(1:150,100)

X = as.matrix(iris[subid,1:4])

lab = as.factor(iris[subid,5])

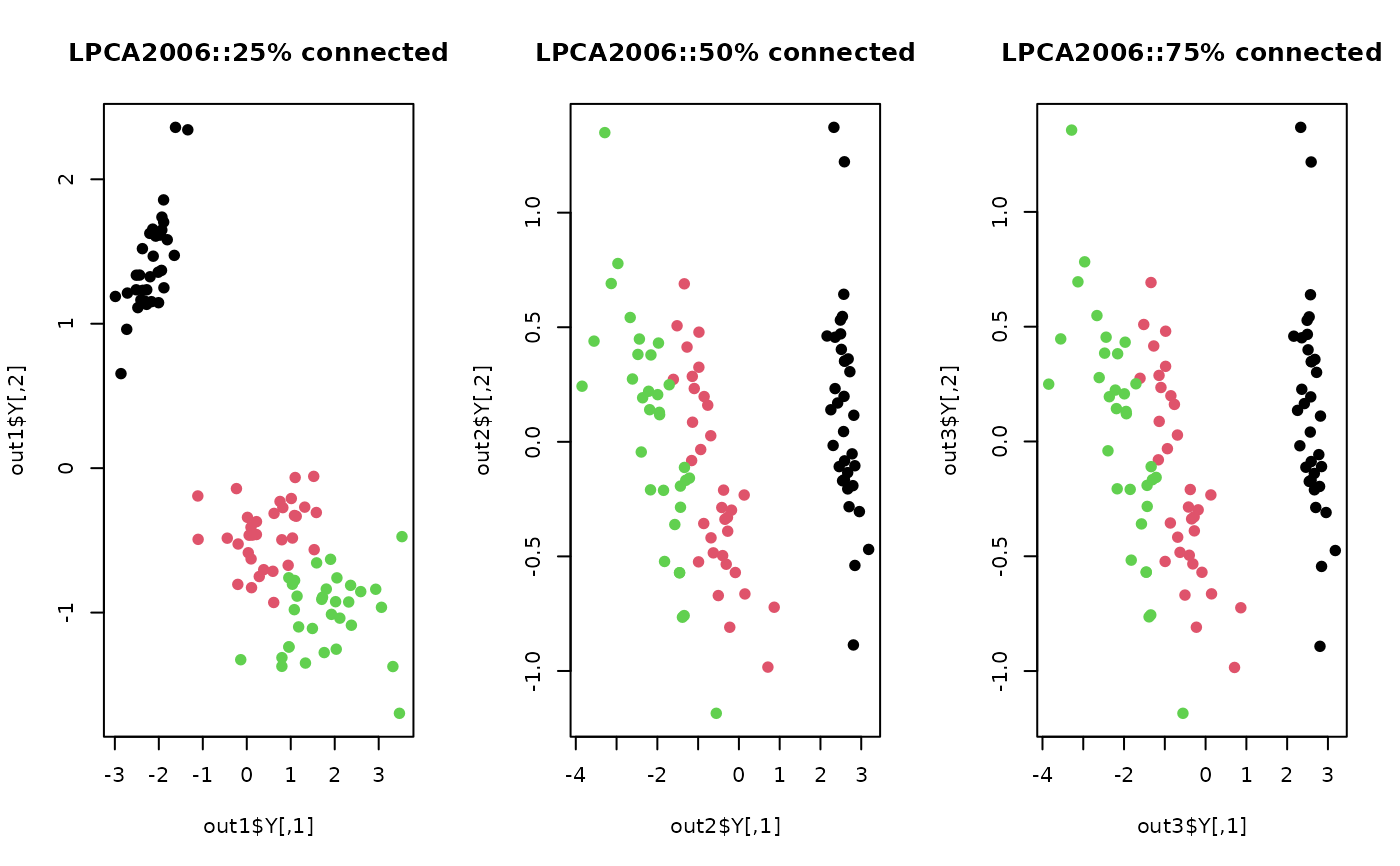

## try different neighborhood size

out1 <- do.lpca2006(X, ndim=2, type=c("proportion",0.25))

out2 <- do.lpca2006(X, ndim=2, type=c("proportion",0.50))

out3 <- do.lpca2006(X, ndim=2, type=c("proportion",0.75))

## Visualize

opar <- par(no.readonly=TRUE)

par(mfrow=c(1,3))

plot(out1$Y, pch=19, col=lab, main="LPCA2006::25% connected")

plot(out2$Y, pch=19, col=lab, main="LPCA2006::50% connected")

plot(out3$Y, pch=19, col=lab, main="LPCA2006::75% connected")

par(opar)

# }
