Laplacian Score (He et al. 2005) is an unsupervised linear feature extraction method. For each feature (variable), it computes a Laplacian score, motivated by the observation that data points from the same class tend to lie close to each other. The score measures how well a feature preserves this local structure, and the algorithm selects the variables with the smallest scores.
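The scoring idea can be sketched in base R. This is only an illustration of the formula in He et al. (2005), not the package's own code: the function name `laplacian_score_sketch`, its arguments `k` and `bw`, and the choice of a symmetric k-nearest-neighbor graph are assumptions made for this sketch.

```r
# Minimal base-R sketch of the Laplacian score (He et al. 2005).
# `laplacian_score_sketch`, `k`, and `bw` are hypothetical names, not Rdimtools API.
laplacian_score_sketch <- function(X, k = 5, bw = 1) {
  X  <- as.matrix(X)
  n  <- nrow(X)
  D2 <- as.matrix(dist(X))^2              # squared Euclidean distances

  # symmetric k-nearest-neighbor adjacency
  A <- matrix(FALSE, n, n)
  for (i in seq_len(n)) {
    d    <- D2[i, ]
    d[i] <- Inf                           # never pick the point itself
    A[i, order(d)[seq_len(k)]] <- TRUE
  }
  A <- A | t(A)

  S   <- exp(-D2 / bw) * A                # heat-kernel weights on graph edges only
  deg <- rowSums(S)                       # diagonal of the degree matrix
  L   <- diag(deg) - S                    # graph Laplacian

  apply(X, 2, function(f) {
    f_tilde <- f - sum(f * deg) / sum(deg)  # remove the degree-weighted mean
    as.numeric(crossprod(f_tilde, L %*% f_tilde)) / sum(deg * f_tilde^2)
  })
}

scores   <- laplacian_score_sketch(iris[, 1:4], k = 5, bw = 1)
selected <- order(scores)[1:2]            # indices of the two smallest scores
```

A smaller score means the feature varies little along graph edges relative to its overall degree-weighted variance, which is why the smallest scores are the ones selected.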

do.lscore(X, ndim = 2, ...)

Arguments

- X

an \((n\times p)\) matrix or data frame whose rows are observations and columns represent independent variables.

- ndim

an integer-valued target dimension (default: 2).

- ...

extra parameters including

- preprocess

an additional option for preprocessing the data. See also aux.preprocess for more details (default: "null").

- type

a vector specifying how the neighborhood graph is constructed. The following types are supported: c("knn",k), c("enn",radius), and c("proportion",ratio). See also aux.graphnbd for more details (default: c("proportion",0.1)).

- t

bandwidth parameter for heat kernel in \((0,\infty)\) (default: 1).
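As a hedged illustration of how the extra parameters above might be passed together, the call below uses only the argument names and values listed in this section. The value 5 for the number of neighbors is an arbitrary choice for the example, and the call is guarded so that nothing runs when Rdimtools is not installed.

```r
# Sketch: passing the documented extra parameters by name.
# k = 5 is an arbitrary illustrative choice, not a recommended setting.
X   <- as.matrix(iris[, 1:4])
res <- NULL
if (requireNamespace("Rdimtools", quietly = TRUE)) {
  res <- Rdimtools::do.lscore(
    X,
    ndim       = 2,
    preprocess = "null",          # documented default
    type       = c("knn", 5),     # k-nearest-neighbor graph
    t          = 1                # heat kernel bandwidth
  )
  print(res$featidx)              # indices of the selected variables
}
```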

Value

a named Rdimtools S3 object containing

- Y

an \((n\times ndim)\) matrix whose rows are embedded observations.

- lscore

a length-\(p\) vector of Laplacian scores. Indices with smallest values are selected.

- featidx

a length-\(ndim\) vector of indices of the selected variables, i.e., those with the smallest Laplacian scores.

- projection

a \((p\times ndim)\) matrix whose columns form a basis for projection.

- trfinfo

a list containing information for out-of-sample prediction.

- algorithm

name of the algorithm.
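The relationship between the returned elements can be sketched in base R. This sketch assumes no preprocessing and assumes that each column of the projection matrix is an indicator vector for one selected variable. The score values below are made up purely for illustration and are not output of the package.

```r
# Sketch of how lscore, featidx, projection, and Y could relate to each other.
# Assumptions: no preprocessing; projection columns are indicator vectors.
X      <- as.matrix(iris[, 1:4])
lscore <- c(0.9, 0.8, 0.2, 0.3)                  # made-up scores, illustration only
ndim   <- 2

featidx <- order(lscore)[seq_len(ndim)]          # variables with the smallest scores

projection <- matrix(0, nrow = ncol(X), ncol = ndim)
projection[cbind(featidx, seq_len(ndim))] <- 1   # one unit entry per column

Y <- X %*% projection                            # same values as X[, featidx]
```

Under these assumptions the embedding is simply the data restricted to the selected columns, which is what makes the method a variable selector rather than a general linear map.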

References

He X, Cai D, Niyogi P (2005). “Laplacian Score for Feature Selection.” In Proceedings of the 18th International Conference on Neural Information Processing Systems, NIPS'05, 507–514.

Examples

# \donttest{

## load the package

library(Rdimtools)

## use iris data

## it is known that features 3 and 4 are more important.

data(iris)

set.seed(100)

subid <- sample(1:150, 50)

iris.dat <- as.matrix(iris[subid,1:4])

iris.lab <- as.factor(iris[subid,5])

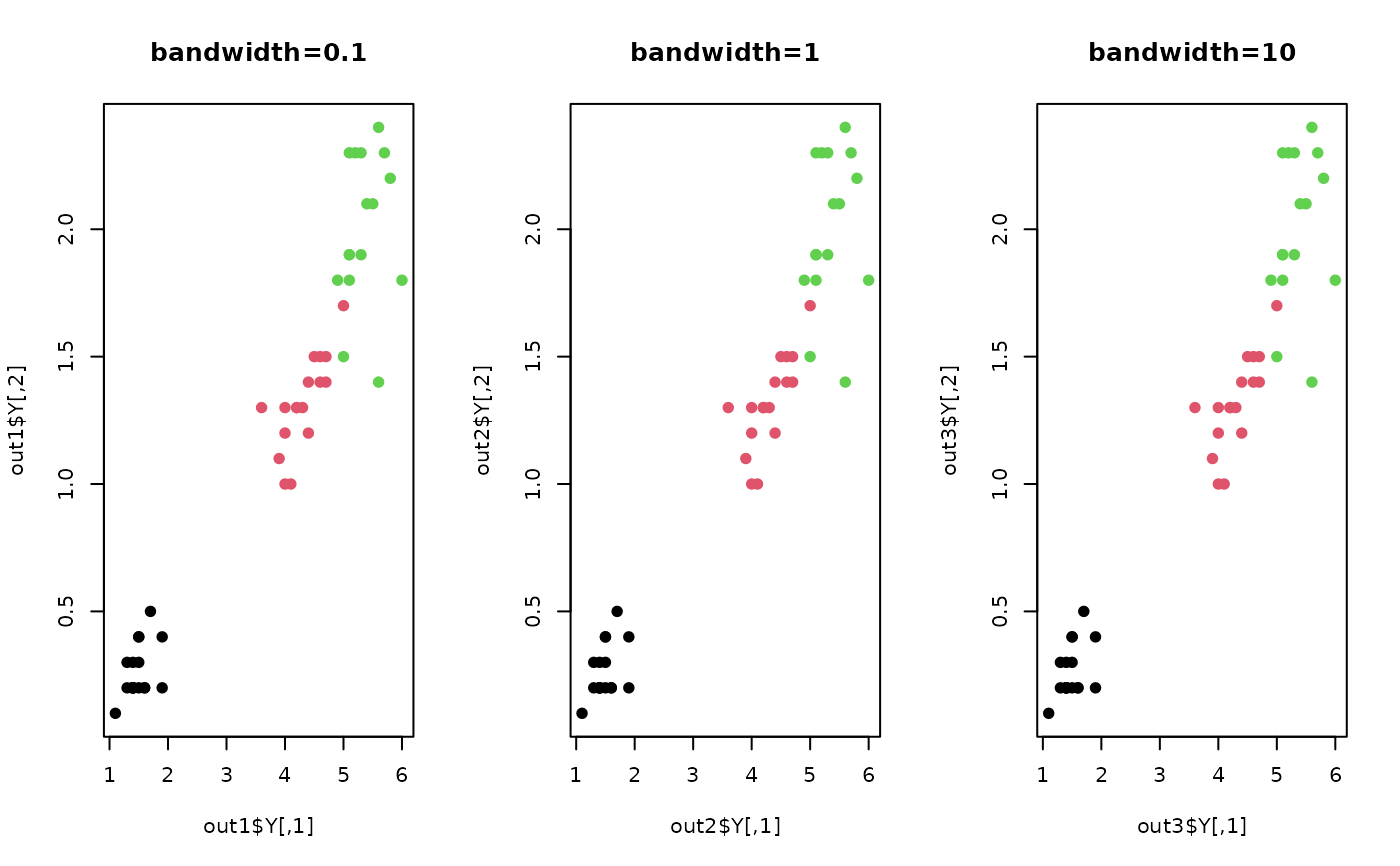

## try different kernel bandwidth

out1 = do.lscore(iris.dat, t=0.1)

out2 = do.lscore(iris.dat, t=1)

out3 = do.lscore(iris.dat, t=10)

## visualize

opar <- par(no.readonly=TRUE)

par(mfrow=c(1,3))

plot(out1$Y, pch=19, col=iris.lab, main="bandwidth=0.1")

plot(out2$Y, pch=19, col=iris.lab, main="bandwidth=1")

plot(out3$Y, pch=19, col=iris.lab, main="bandwidth=10")

par(opar)

# }
